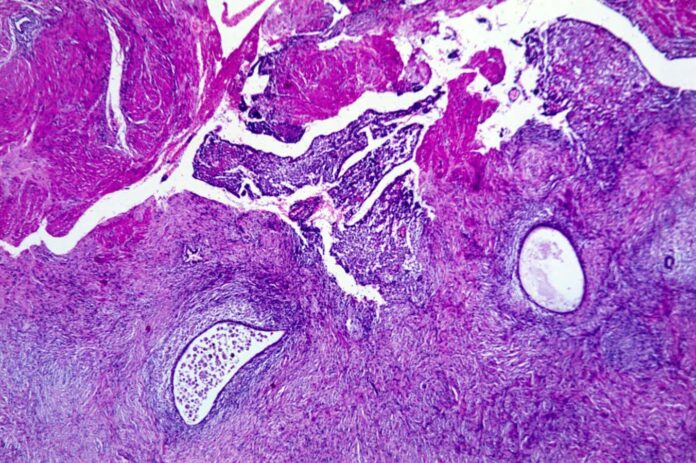

Endometriosis tissue viewed under a microscope

BIOPHOTO ASSOCIATES/SCIENCE PHOTO LIBRARY

Low levels of a particular compound in faeces may be a sign of endometriosis – and supplementation of that compound might even help control the condition.

Affecting nearly 200 million people worldwide, endometriosis occurs when the tissue lining the uterus grows in other parts of the reproductive tract. There is no known cure, but lesions can be periodically removed through surgery once the condition has been diagnosed. However, due largely to a lack of awareness and understanding, it currently takes an average of more than six years for endometriosis to be diagnosed.

Previous research has suggested that the gut microbiome may play a role in the condition. To investigate further, Ramakrishna Kommagani at Baylor College of Medicine in Houston, Texas, and his colleagues collected stool samples from 18 women with endometriosis and 31 women without the condition. They examined the bacteria in the faeces as well as the metabolome – the set of chemicals produced by the gut bacteria.

They found that the women with endometriosis had lower levels of the metabolite 4-hydroxyindole in their faeces, probably due to alterations in the gut microbiome.

Based on that discovery, commercial stool analyses could allow rapid screening for this widely “underdiagnosed, understudied and underappreciated” condition, leading to early and effective management, says Kommagani.

“Stool is really easy to collect, and it’s not invasive like current diagnostic techniques such as laparoscopy [a kind of keyhole surgery],” he says.

To explore whether 4-hydroxyindole might also have a protective effect, the team fed supplementary 4-hydroxyindole to a group of mice that had tissue implanted in their abdomens to induce endometriosis. After 14 days of treatment, those mice didn’t have fewer lesions compared with control animals, but their lesions were markedly less severe, and they showed signs of having significantly less pain.

Further experiments indicated that when mice with established endometriosis received 4-hydroxyindole, their lesions vastly improved. The results were similar in mice that were grafted with human endometriosis lesions, suggesting the treatment could be effective in humans as well.

“We believe this is a very good therapeutic option, as it’s naturally occurring in the body – not a drug or something synthesised,” says Kommagani.

However, larger studies in humans will be needed to confirm whether 4-hydroxyindole can be used to diagnose endometriosis and whether the compound is effective as a treatment.
